Watch 10 video solutions for Convert 1D Array Into 2D Array, a easy level problem involving Array, Matrix, Simulation. This walkthrough by NeetCodeIO has 8,192 views views. Want to try solving it yourself? Practice on FleetCode or read the detailed text solution.

You are given a 0-indexed 1-dimensional (1D) integer array original, and two integers, m and n. You are tasked with creating a 2-dimensional (2D) array with m rows and n columns using all the elements from original.

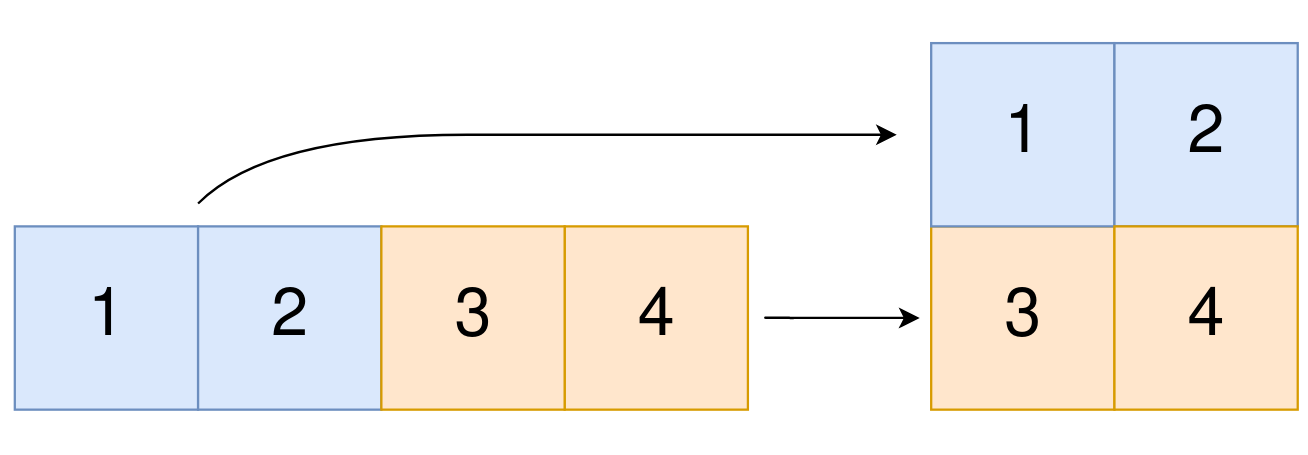

The elements from indices 0 to n - 1 (inclusive) of original should form the first row of the constructed 2D array, the elements from indices n to 2 * n - 1 (inclusive) should form the second row of the constructed 2D array, and so on.

Return an m x n 2D array constructed according to the above procedure, or an empty 2D array if it is impossible.

Example 1:

Input: original = [1,2,3,4], m = 2, n = 2 Output: [[1,2],[3,4]] Explanation: The constructed 2D array should contain 2 rows and 2 columns. The first group of n=2 elements in original, [1,2], becomes the first row in the constructed 2D array. The second group of n=2 elements in original, [3,4], becomes the second row in the constructed 2D array.

Example 2:

Input: original = [1,2,3], m = 1, n = 3 Output: [[1,2,3]] Explanation: The constructed 2D array should contain 1 row and 3 columns. Put all three elements in original into the first row of the constructed 2D array.

Example 3:

Input: original = [1,2], m = 1, n = 1 Output: [] Explanation: There are 2 elements in original. It is impossible to fit 2 elements in a 1x1 2D array, so return an empty 2D array.

Constraints:

1 <= original.length <= 5 * 1041 <= original[i] <= 1051 <= m, n <= 4 * 104Problem Overview: You receive a 1D array original and two integers m and n. The goal is to reshape the array into an m x n matrix using all elements in row-major order. If the number of elements in original is not exactly m * n, constructing such a matrix is impossible and you return an empty matrix.

The problem focuses on simple indexing logic and matrix construction using concepts from array and matrix manipulation. Since each element is processed exactly once, the challenge is mainly about mapping indices correctly.

Approach 1: Using Direct Index Mapping (O(n) time, O(m*n) space)

Create a result matrix with m rows and n columns. Iterate through the original array once and compute the target position for each element using index math. For an index i in the 1D array, the row becomes i / n and the column becomes i % n. This mapping converts the linear index into a 2D coordinate following row-major order. The approach avoids nested loops and directly calculates the correct cell for each element. Time complexity is O(n) where n is the length of the input array, and space complexity is O(m*n) for the constructed matrix.

Approach 2: Iterative Filling (O(n) time, O(m*n) space)

Another straightforward method is to build the matrix row by row. First check if original.length == m * n. If valid, iterate through the array while maintaining a pointer. For each row, fill n elements sequentially before moving to the next row. This simulates how a matrix is naturally filled in row-major order and is often easier to reason about during implementation. The algorithm still processes each element exactly once, giving O(n) time complexity and O(m*n) space complexity. This method is commonly described as a simple simulation of matrix construction.

Recommended for interviews: Direct index mapping is typically the preferred explanation. It shows you understand how linear indices translate into matrix coordinates and demonstrates comfort with array math. The iterative filling approach is equally efficient and easier to read, but interviewers often appreciate the concise index-mapping insight.

| Approach | Time | Space | When to Use |

|---|---|---|---|

| Direct Index Mapping | O(n) | O(m*n) | Best general solution. Efficient and shows clear understanding of row/column index math. |

| Iterative Filling | O(n) | O(m*n) | Good when writing simple readable code or explaining matrix construction step-by-step. |