We have n jobs, where every job is scheduled to be done from startTime[i] to endTime[i], obtaining a profit of profit[i].

You're given the startTime, endTime and profit arrays, return the maximum profit you can take such that there are no two jobs in the subset with overlapping time range.

If you choose a job that ends at time X you will be able to start another job that starts at time X.

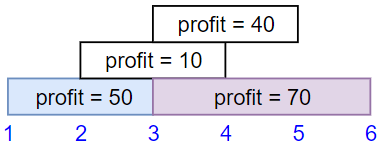

Example 1:

Input: startTime = [1,2,3,3], endTime = [3,4,5,6], profit = [50,10,40,70] Output: 120 Explanation: The subset chosen is the first and fourth job. Time range [1-3]+[3-6] , we get profit of 120 = 50 + 70.

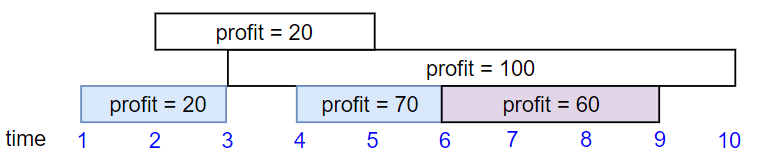

Example 2:

Input: startTime = [1,2,3,4,6], endTime = [3,5,10,6,9], profit = [20,20,100,70,60] Output: 150 Explanation: The subset chosen is the first, fourth and fifth job. Profit obtained 150 = 20 + 70 + 60.

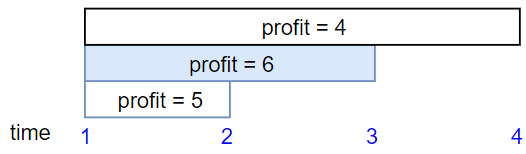

Example 3:

Input: startTime = [1,1,1], endTime = [2,3,4], profit = [5,6,4] Output: 6

Constraints:

1 <= startTime.length == endTime.length == profit.length <= 5 * 1041 <= startTime[i] < endTime[i] <= 1091 <= profit[i] <= 104Problem Overview: You receive three arrays representing job startTime, endTime, and profit. Each job must finish before another begins. The goal is to select a subset of non-overlapping jobs that produces the maximum total profit.

Approach 1: Dynamic Programming with Binary Search (O(n log n) time, O(n) space)

Start by combining the inputs into job tuples and sorting them by startTime (or endTime). The key idea is to treat this as a weighted interval scheduling problem. For each job, you decide whether to skip it or take it and jump to the next non-overlapping job. Instead of scanning linearly to find that next job, use binary search on the sorted start times to locate the first job whose start time is greater than or equal to the current job’s end time.

Define a dp[i] value representing the maximum profit achievable starting from job i. The transition becomes dp[i] = max(dp[i+1], profit[i] + dp[nextIndex]), where nextIndex is found using binary search. Sorting plus binary search reduces the transition lookup from O(n) to O(log n), giving an overall time complexity of O(n log n). This approach relies heavily on Sorting, Binary Search, and Dynamic Programming. It scales well to large job lists and is the standard optimal solution.

Approach 2: Dynamic Programming with Linear Scan (O(n^2) time, O(n) space)

This version keeps the same dynamic programming idea but replaces the binary search step with a linear scan. After sorting jobs by start time, iterate through the remaining jobs to find the next job that starts after the current job ends. The recurrence is identical: choose the maximum between skipping the current job or taking its profit and adding the result from the next compatible job.

The implementation is straightforward because it avoids binary search and extra indexing structures. However, the search for the next valid job may take up to O(n) time for each state, producing a total time complexity of O(n^2). Space complexity remains O(n) for the DP array. This approach works well when input sizes are small or when you want a simpler baseline solution before optimizing.

Recommended for interviews: The expected solution is dynamic programming with binary search. It demonstrates understanding of weighted interval scheduling and shows you can optimize DP transitions using sorted structures. Starting with the linear scan version can help explain the intuition, but converting the lookup to binary search is what interviewers typically want to see.

This approach leverages sorting, dynamic programming, and binary search to solve the problem efficiently.

endTime.dp where dp[i] stores the maximum profit we can achieve by considering the first i jobs.The solution begins by sorting the jobs in increasing order of their end times. This allows us to consider each job and decide whether to include it based on the results of previously solved subproblems stored in the dp array.

For each job, we perform a binary search using bisect_right to find the index of the last job that doesn't conflict with the current one, and update the maximum profit accordingly.

Time Complexity: O(n log n) due to sorting and binary search.

Space Complexity: O(n) for the dp array.

This approach uses dynamic programming to solve the problem by scanning jobs and keeping track of maximum profits without the need of an auxiliary data structure for overlap checking. Instead of binary search, it uses a precomputed array to track the last non-overlapping job for each job.

endTime.The Python solution sorts jobs by endTime and iterates through each job, computing the maximum profit by considering two cases: including or excluding the current job. It utilizes a nested loop to find the last non-overlapping job in an optimized way, considering constraints on input sizes.

Python

JavaScript

Time Complexity: O(n^2) due to the nested loop search for non-overlapping jobs.

Space Complexity: O(n) for the dp array.

First, we sort the jobs by start time in ascending order, then design a function dfs(i) to represent the maximum profit that can be obtained starting from the i-th job. The answer is dfs(0).

The calculation process of function dfs(i) is as follows:

For the i-th job, we can choose to do it or not. If we don't do it, the maximum profit is dfs(i + 1); if we do it, we can use binary search to find the first job that starts after the end time of the i-th job, denoted as j, then the maximum profit is profit[i] + dfs(j). We take the larger of the two. That is:

$

dfs(i)=max(dfs(i+1),profit[i]+dfs(j))

Where j is the smallest index that satisfies startTime[j] \ge endTime[i].

In this process, we can use memoization search to save the answer of each state to avoid repeated calculations.

The time complexity is O(n times log n), where n$ is the number of jobs.

Python

Java

C++

Go

TypeScript

We can also change the memoization search in Solution 1 to dynamic programming.

First, sort the jobs, this time we sort by end time in ascending order, then define dp[i], which represents the maximum profit that can be obtained from the first i jobs. The answer is dp[n]. Initialize dp[0]=0.

For the i-th job, we can choose to do it or not. If we don't do it, the maximum profit is dp[i]; if we do it, we can use binary search to find the last job that ends before the start time of the i-th job, denoted as j, then the maximum profit is profit[i] + dp[j]. We take the larger of the two. That is:

$

dp[i+1] = max(dp[i], profit[i] + dp[j])

Where j is the largest index that satisfies endTime[j] leq startTime[i].

The time complexity is O(n times log n), where n$ is the number of jobs.

Similar problems:

| Approach | Complexity |

|---|---|

| Dynamic Programming with Binary Search | Time Complexity: |

| Dynamic Programming With Linear Scan | Time Complexity: |

| Memoization Search + Binary Search | — |

| Dynamic Programming + Binary Search | — |

| Approach | Time | Space | When to Use |

|---|---|---|---|

| Dynamic Programming with Binary Search | O(n log n) | O(n) | Best general solution for large inputs. Efficiently finds the next compatible job using binary search. |

| Dynamic Programming with Linear Scan | O(n^2) | O(n) | Simpler implementation. Useful for understanding the DP transition before optimizing. |

| Brute Force Recursion | O(2^n) | O(n) | Conceptual baseline that tries every subset of jobs. Quickly becomes impractical for large n. |

Maximum Profit in Job Scheduling - Leetcode 1235 - Python • NeetCodeIO • 55,650 views views

Watch 9 more video solutions →Practice Maximum Profit in Job Scheduling with our built-in code editor and test cases.

Practice on FleetCodePractice this problem

Open in Editor